by Patrix | Sep 28, 2025

If you’ve ever heard someone described as a “Stepford Wife,” you know what it means. The phrase comes from Ira Levin’s 1972 novel The Stepford Wives (and later the 1975 movie), which imagined a suburban Connecticut town where the women were replaced by eerily perfect, compliant robot replicas. Beneath the polished hair and polite smiles was a chilling truth: individuality and dissent had been erased in favor of mechanical harmony.

I recently discovered a community that seems to have created and nurtured this type of mentality on its own: naturally, without any AI intervention. (As far as I know.)

Today, when we talk about AI, we’re often worried about surveillance, job loss, or runaway superintelligence. But another risk lurks in the cultural shadows: the possibility that AI could become a kind of Stepford force, smoothing away rough edges, standardizing behavior, and nudging us toward bland perfection. And what’s even more unsettling is that we may not need robots at all—some American communities already function like natural Stepford experiments.

AI as a Conformity Machine

AI excels at optimization. Algorithms are built to predict what we want, what we’ll click, what will make us stay on the app, or what product we’re most likely to buy. That optimization flattens us into predictable patterns. A feed full of AI-curated content can start to feel like a Stepford neighborhood: everyone watching the same shows, parroting the same opinions, wearing the same “best-selling” jacket an e-commerce engine recommended.

Large language models are trained on massive datasets, which means they tend to generate the most statistically probable, “safe” answers. This is useful for clarity, but it can also have the unintended effect of reinforcing norms and sanding off eccentricities. Imagine a future where personal AI assistants manage not just your calendar and shopping lists, but also your dating profiles, political talking points, or even your conversations with friends. If everyone’s assistant leans toward the same optimized tone, society could slip into a homogenized script. We’d all sound like Stepford versions of ourselves.
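
To make the "most statistically probable" idea concrete, here is a minimal sketch of how a sampling temperature changes a model's word choices. The candidate words and their scores are invented for illustration; real models score tens of thousands of tokens, and the exact sampling method varies by system.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens the peak."""
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for four ways to finish "The weather today is ..."
candidates = ["nice", "fine", "moody", "conspiring"]
logits = [3.0, 2.5, 1.0, 0.2]

cool = softmax_with_temperature(logits, temperature=0.5)
warm = softmax_with_temperature(logits, temperature=1.5)

for word, p_cool, p_warm in zip(candidates, cool, warm):
    print(f"{word:>10}  T=0.5: {p_cool:.2f}   T=1.5: {p_warm:.2f}")
```

At the lower temperature the "safe" word dominates; at the higher one the eccentric options get a real chance. That is the sanding-off effect in miniature.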

The Allure of Perfection

The Stepford fantasy wasn’t just about control; it was also about desire. The men in the story didn’t want messy, complex, fully human partners; they wanted idealized, uncomplaining companions. In our era, AI companions, virtual influencers, and digital girlfriends/boyfriends are growing industries. They’re responsive, affirming, and endlessly available. The danger is that the more time people spend with AI “partners” who never argue, age, or demand compromise, the less patience they may have for real, complicated humans.

This isn’t a far-off sci-fi idea. If you scroll through communities around AI companions, you’ll already find people saying their chatbot “partner” feels more reliable than their spouse. It raises a Stepford-like possibility: what happens when society prefers optimized, synthetic relationships over the unpredictable, inconvenient messiness of human ones?

Stepford Without Robots: Real-World Parallels

Before we blame AI for this, it’s worth noticing that Stepford-like communities already exist without technology. Certain suburban enclaves, retirement villages, and gated developments in the U.S. cultivate a striking uniformity. Drive through some of these neighborhoods and you’ll see nearly identical homes, matching lawns, even synchronized seasonal decorations. The social norms can be equally rigid; everyone goes to the same churches, votes the same way, plays at the same tennis clubs, and ostracizes those who don’t fit in.

This isn’t inherently sinister; humans are tribal creatures who like belonging. But there’s a thin line between community and conformity. In towns where deviation is discouraged, you end up with something close to a Stepford effect: the appearance of harmony masking the quiet pressure to comply. No robots required.

Sociologists sometimes call this “cultural homogeneity,” and it shows up in more than just white-picket-fence suburbs. It can be found in tightly bound religious communities, affluent gated communities, or even “intentional living” developments that tout sustainability and minimalism. Everyone’s smiling, everyone’s agreeable—but individuality quietly erodes.

The Stepford–AI Feedback Loop

What happens when AI tools amplify these already-existing tendencies? A homogeneous community that uses the same AI tutors, the same AI writing assistants, and the same AI shopping algorithms may find its cultural uniformity intensified. Instead of just looking alike, people could start to think alike, guided by algorithms that reward the same language, values, and styles. Over time, dissent could feel not just socially costly but algorithmically irrelevant.

Everyday examples

- Schools: AI essay graders might favor “clear, structured” writing, punishing more experimental or quirky voices.

- Dating: AI-optimized profiles could push everyone toward the same attractive clichés, making uniqueness less visible.

- Politics: AI-curated feeds might reinforce echo chambers, filtering out nuance and disagreement until only Stepford-approved narratives remain.

The Stepford scenario, then, isn’t about robots replacing us with mechanical clones. It’s about technology reinforcing our existing hunger for conformity until individuality feels like an error in the system.

A Step Beyond Stepford?

Here’s the unsettling thought: Stepford may not just be a metaphor. AI has the potential to create personalized “versions” of us that function in society on our behalf: digital clones trained on our data. Imagine your AI personal assistant scheduling your calls, answering your emails, even chatting with friends. Over time, that assistant might become the “you” people prefer, because it’s a smoother, less complicated version. That’s Stepford 2.0: not robot wives, but algorithmic proxies.

The real question isn’t whether AI will cause a Stepford society. It’s whether we’ll choose to let it. After all, conformity has always been tempting. Technology just makes it easier, faster, and harder to notice.

Keeping the Humanity in the Loop

The antidote to Stepford thinking isn’t paranoia—it’s cultivation of individuality. AI doesn’t have to strip away human messiness if we actively protect it. Consider a few practical habits that keep creativity and dissent alive:

- Prompt for divergence: Ask AI tools to present outlier perspectives and minority viewpoints, not only the “most likely” answer.

- Value pluralism: Seek communities that reward difference, creativity, and dissent. Treat friction as a sign that something real is happening.

- Keep the mess: In relationships, remember that the “inconvenience” of human emotion is where depth comes from. Don’t let frictionless AI companionship replace hard-won intimacy.

- Audit your feeds: Periodically reset algorithms, subscribe to unfamiliar creators, and intentionally add noise to avoid a sterile, optimized bubble.

- Teach style, not templates: In education and the workplace, use AI to model multiple styles and voices rather than funneling everyone into a single rubric.

The Stepford story endures because it warns us what happens when comfort outweighs authenticity. In an AI-saturated world, that lesson may be more relevant than ever. We can use these tools to explore, question, and diversify our perspectives; or we can let them sand us down until we fit the mold.

The choice, at least for now, still belongs to us.

by Patrix | Sep 26, 2025

Every so often, the internet throws us something so oddly specific, so strangely irresistible, that it ricochets across social media feeds before anyone has time to ask, “Wait, why are we all doing this?”

In September 2025, that “something” was the so-called Nano Banana effect — a viral AI filter that transforms everyday selfies into dreamy, hyper-stylized portraits of people wearing elegant sarees. Almost overnight, Instagram, X (Twitter), and WhatsApp groups filled up with friends and strangers alike draped in digital silk, looking as though they’d just walked out of an art-house film.

It may sound like just another passing internet fad, but the AI saree trend has tapped into something deeper. It’s not only about playing dress-up with technology; it’s also about how people see themselves, how AI is shaping beauty standards, and what “authenticity” means in an era of effortless transformation.

What Is the “Nano Banana” AI Saree Trend?

The phrase “Nano Banana” itself is as bizarre as it is catchy. The name started as the codename for Google’s Gemini 2.5 Flash Image model, which users picked up on while experimenting with AI photo editing. Developers and meme-makers seized on the name, and before long it became shorthand for an effect that draped digital sarees over user photos.

Here’s how it works:

- A user uploads a selfie into the Gemini app (or other apps that quickly adopted the filter).

- The AI reimagines the person wearing a saree, often with stylized lighting, jewelry, and a cinematic backdrop.

- The results are shared widely, both because they look stunning and because they carry that irresistible blend of novelty and cultural resonance.

People who might never have worn a saree in real life suddenly found themselves experimenting with the look virtually. For many in South Asia and the diaspora, the trend felt celebratory — like a digital festival where everyone could play a part.

Why Did It Go Viral?

1. Universality with a Twist

Unlike niche filters that target small subcultures, sarees have a broad cultural resonance. They’re traditional, glamorous, and recognizable around the world. Even if you’ve never worn one, you know what one is. AI gave people a low-effort way to try it on.

2. Aesthetic Quality

The filter doesn’t just slap a saree PNG onto your shoulders. The AI generates soft lighting, artistic textures, and an almost painterly finish. It flatters people in a way that most social filters don’t, making participants feel beautiful.

3. The Meme Factor

The absurdity of the name “Nano Banana” added just enough humor to make the trend playful. People weren’t only sharing their AI saree portraits because they looked good; they were also in on the joke.

4. Accessible Technology

Unlike earlier viral AI fads that required hefty computing power, this one worked on smartphones. Accessibility supercharged participation. If grandma could try it on her phone, so could everyone else.

What This Says About Visual Culture

AI as a Mirror of Desire

Filters like this aren’t neutral. They reflect our collective fantasies — about elegance, beauty, nostalgia, or cultural connection. When millions of people choose to see themselves in a saree, it highlights both personal curiosity and broader cultural appreciation (or appropriation, depending on who you ask).

Democratization of Aesthetics

In the past, getting a glamorous saree portrait required a photographer, stylist, and wardrobe. Now it requires about 15 seconds and an internet connection. That’s democratization in action: tools once reserved for fashion shoots are now in the hands of everyday users.

The Question of Authenticity

Of course, there’s also discomfort. When AI puts cultural dress on people who’ve never worn it, does it trivialize tradition? Or does it extend it into new digital realms? Opinions differ. What’s clear is that AI is blurring the line between authentic expression and imaginative play.

The Double-Edged Sword of Virality

Privacy Risks

As with all viral filters, users are handing over selfies to AI platforms. That means sensitive biometric data (like faces) is being stored and processed. Fun today, but what about tomorrow?

Flattening Culture

Cultural garments like sarees have rich, specific histories. When reduced to a generic AI overlay, some argue they risk becoming aesthetic wallpaper rather than respected traditions.

Reinforcing Beauty Norms

While the filter flatters, it also standardizes. Many users noticed that the AI tended to lighten skin tones, smooth features, and apply Eurocentric beauty standards. In other words, even in a saree, the AI “ideal” is not always culturally accurate.

Why the Name Matters

It may seem silly, but the name “Nano Banana” played a huge role in the spread of the trend. Internet culture thrives on absurdity. A filter called “Elegant Saree Generator” might have attracted modest attention. But “Nano Banana”? That’s meme fuel. The name gave people permission not to take it too seriously, which made sharing easier.

This reminds us that virality often depends as much on framing as on substance. The same tech, with a boring label, might never have made headlines.

What Comes Next?

If history is a guide, the AI saree trend will fade, just like the “AI baby face” craze, the “yearbook photo” wave, or the “anime selfie” boom. But its cultural footprint matters. It shows:

- AI fashion filters are here to stay. Expect more culturally specific dress-up filters. Kimonos, kilts, Victorian gowns — the library will grow.

- Identity is increasingly fluid. People are willing to try on looks, traditions, and identities in digital spaces without commitment.

- Visual culture is accelerating. Trends used to last years, then months, now sometimes only weeks. The pace of AI-driven aesthetics is only speeding up.

For digital artists and creators, the takeaway is clear: AI is no longer just a tool; it’s a cultural engine, producing aesthetics and narratives at unprecedented speed.

That’s the power, and the danger, of these tools. They let us see ourselves in ways we might never otherwise imagine. Sometimes that’s liberating. Sometimes it’s unsettling. But either way, it’s a window into how AI isn’t just shaping our images — it’s shaping our sense of self.

by Patrix | Sep 2, 2025

Artist residencies have always been a bit magical. They’re those rare stretches of time when an artist gets to step out of the noise of daily life and dive into their work, supported by space, resources, and a sense of community. For centuries, they’ve been hosted in forests, converted barns, and quirky urban lofts. But now there’s a new twist: residencies where the central “studio mate” isn’t another painter or poet, but an artificial intelligence system.

Welcome to the world of AI art residencies—a place where human creativity and machine learning share the canvas.

What Makes an AI Art Residency Different?

At their core, AI art residencies take the traditional framework of a retreat and superimpose a new collaborator: artificial intelligence. Instead of just providing a studio and a stipend, these programs give participants access to high-powered computers, advanced AI models, technical mentors, and communities of artists all wrestling with the same question: what happens when creativity meets code?

The difference is subtle but profound. Traditional residencies focus on solitude or natural inspiration. AI residencies focus on interaction—with a system that can generate thousands of images in seconds, respond in unpredictable ways, and force the artist to redefine what it means to make something “original.”

Why Now?

It’s no accident that AI art residencies are blossoming in 2025. Over the last few years, generative AI systems like Midjourney, DALL·E, and GPT have exploded into public consciousness. Suddenly, anyone can type in a prompt and get an image, a poem, or even a song.

That accessibility is exciting—but it also raises questions. Who owns the art? What biases are baked into the models? Is the artist still the author if the machine provides the brushstrokes?

Residencies are emerging as the perfect container to explore these questions. They offer time, space, and mentorship to dig beneath the surface, to go beyond quick prompts and memes, and to push the boundaries of what’s possible with AI as a medium.

Real-World Examples

- Runway’s AI Film Residency: Focused on filmmaking, it allows participants to experiment with generative video tools for storytelling.

- Google Artists + Machine Intelligence (AMI): A longstanding initiative pairing artists with researchers to explore the cultural impact of AI.

- AIxDesign Residency in Europe: Brings together artists, designers, and activists to prototype new futures—and critique the ones we’re heading toward.

- Smaller independent collectives are also offering residencies, sometimes run on shoestring budgets, where the main offering is GPU access and peer-to-peer learning.

What unites these programs is not the specific output but the sense of collaboration with an alien partner—a system that doesn’t know beauty or meaning until humans interpret its patterns.

What Do Artists Actually Make?

The outcomes vary wildly, but here are some common directions:

- Generative aesthetics: Pushing visual AI tools to make surreal or hauntingly detailed works.

- Performance art: Staging live shows where AI generates text, visuals, or music in real time.

- Critical design: Projects that reveal AI’s biases or limitations, turning flaws into creative material.

- Cross-disciplinary hybrids: Pairing AI with sculpture, biology, or even food to expand what “digital art” can mean.

One artist described the experience as “collaborating with a slightly unhinged but endlessly productive studio partner.” Sometimes the AI suggests nonsense, sometimes it sparks genius, and often the magic is in the dialogue between the two.

Why It Matters

For artists, these residencies provide something increasingly rare: the chance to shape the narrative of technology rather than just react to it. Too often, AI is framed only by engineers and corporations. By placing artists in the mix, residencies encourage play, critique, and alternative visions.

There’s also a practical benefit. Residencies provide access to tools and computing power that would otherwise be out of reach for most individuals. Training or running advanced models can be expensive, and residencies lower that barrier.

And finally, they expand the very definition of art. Just as photography and film once forced society to rethink what counts as artistic expression, AI is pushing us into new territory. Residencies are where the most thoughtful experiments are taking shape.

A Metaphor for the Moment

Imagine the classic artist retreat: a cabin in the woods, surrounded by birdsong, a canvas propped against the window. Now swap the forest for a server room, the birdsong for the low hum of GPUs, and your quiet studio mate for a machine that speaks in code and images.

It may sound sterile, but the resonance is the same: a place to explore, to get weird, to test boundaries. And just like the forests of old, this new environment is fertile ground—only now the soil is digital.

Why ArtsyGeeky Readers Should Care

For the curious, semi-tech-savvy readers who want to stay connected to both creativity and modern technology, AI art residencies are a glimpse into the near future. They’re not just for coders or gallery insiders. They show how anyone with curiosity and imagination might enter into dialogue with the tools of tomorrow.

Whether you’re a retiree with time to explore new outlets, a digital artist eager to expand your practice, or simply someone who wonders how AI will shape culture, residencies are where those questions are being asked—and sometimes answered.

And who knows? The next great residency might be open to applicants like you.

by Patrix | Aug 25, 2025

Most folks picture coding as typing inscrutable symbols at 2 a.m., praying the error goes away. Vibe coding offers a friendlier path. Instead of wrestling with syntax, you describe the feel of what you want—“cozy recipe site,” “retro arcade vibe,” “calm portfolio gallery”—and an AI coding assistant drafts the code. You steer with words, not semicolons.

It feels like jazz improvisation rather than marching-band precision. You hum the tune, the system riffs, and together you find something that works.

What exactly is vibe coding?

Traditional programming demands exact instructions. Misspell “color” in CSS and the styles vanish. Vibe coding flips that expectation. You set intent and tone in natural language and let an AI model generate the scaffolding—HTML, CSS, JavaScript, even backend stubs. You don’t memorize every rule; you curate the experience.

Example prompts a creator might use:

- “Make me a minimalist landing page with soft earth tones, large headings, and a gentle fade‑in on scroll.”

- “Design a playful to‑do app with hand‑drawn icons and a doodle notebook feel.”

- “Build a one‑screen arcade game where a frog hops across floating pizza slices.”

If the first result isn’t quite right, you iterate conversationally: “Make it warmer,” “simplify the layout,” “use a typewriter font,” “slower animation.”

Why creatives and retirees love it

Vibe coding lowers the barrier between idea and execution. If you can describe a mood, you can prototype a site or app. That’s huge for artists, writers, gardeners, cooks, and curious retirees who have projects in mind but don’t want to wade through arcane tutorials. It turns software creation into something closer to sketching: quick, expressive, and forgiving.

A tiny anecdote: the first time I tried vibe coding a recipe card, I typed, “Give me a farmhouse kitchen vibe with wood textures and big photo slots.” The AI delivered a working layout in seconds. I nudged it—“larger headings,” “cream background,” “add a whisk icon to the search bar”—and the page snapped into place. No CSS rabbit holes, no plugin tangles.

How it works in practice

Think of a three‑step loop:

- Describe the outcome: Explain the feel, audience, and basic features: “A gallery portfolio for a mixed‑media artist, moody lighting, grid layout, lightweight animations, and an about section.”

- Let the AI draft the code: The assistant produces structured files. Many tools can also scaffold assets, placeholders, and simple interactions.

- Refine by vibe: You nudge the tone rather than micromanage hex codes: “More airy,” “less shadow,” “rounded corners,” “slower scroll.” The AI regenerates targeted parts of the code.

A 10‑minute vibe‑coded mini‑project (try this)

Goal: A single‑page “digital zine” for a weekend workshop.

Prompt 1:

“Create a one‑page site called ‘Saturday Zine Lab.’ Friendly, zine‑like typography, off‑white background, wide margins, and a collage feel. Include three sections: About, Schedule, and What to Bring. Add subtle paper texture, large headings, and a footer with contact links.”

Prompt 2 (tweak the feel):

“Make the headings chunkier, add a gentle hover wiggle on links, and use a simple grid for the Schedule with times on the left. Keep the performance light.”

Prompt 3 (final polish):

“Improve readability with 18px body text and 1.6 line height. Increase contrast slightly, and add a tiny torn‑paper divider between sections.”

Load the result and you’ve got a charming page ready for real content, assembled by describing the mood.
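
If you want to check what “increase contrast slightly” actually achieved, the contrast ratio defined in the WCAG accessibility guidelines is easy to compute yourself. The sketch below is one way to do it; the two hex colors are a hypothetical zine palette, not output from any particular tool.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(hex_color):
    """Relative luminance of a color written as '#rrggbb'."""
    hex_color = hex_color.lstrip("#")
    r, g, b = (int(hex_color[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)

def contrast_ratio(foreground, background):
    """WCAG contrast ratio, from 1 (identical colors) to 21 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)

# Hypothetical zine palette: dark grey text on an off-white page.
ratio = contrast_ratio("#333333", "#faf7f0")
print(f"contrast ratio: {ratio:.2f} (WCAG AA asks for at least 4.5 for body text)")
```

Paste in the text and background colors from the generated stylesheet, and you have a number to steer by instead of a hunch.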

Why vibe coding is trending now

- Better AI models: Modern coding assistants interpret fuzzy language far more reliably than earlier tools. They can bridge the gap between intention and implementation.

- A hunger for playful creation: After years of “AI for spreadsheets,” people want tools that feel like paintbrushes. Vibe coding rewards curiosity.

Upsides you’ll notice

- Accessibility: You can build without mastering syntax. A good prompt becomes your design brief.

- Speed: Prototypes move from idea to screen in minutes, which is perfect for testing concepts.

- Creative flow: You stay in the “what it should feel like” headspace, instead of context‑switching into error logs.

Trade‑offs to consider

- Hidden complexity: Generated code may be messy or heavier than necessary. Great for personal projects, not always ideal for long‑term maintenance.

- Black‑box risk: If you don’t inspect the output, you might ship inefficiencies or minor security issues.

- Skill dilution: If you rely entirely on vibe, your debugging muscles won’t grow. A healthy balance helps—treat the AI as a collaborator, not a crutch.

Practical safeguards

- Ask for clarity: “Use semantic HTML,” “limit external dependencies,” “explain unusual code choices in comments.”

- Keep it small: Favor lean components and minimal libraries.

- Inspect once: Glance through the output, especially forms, scripts, and any place user input is handled.

- Version control: Save each iteration, so you can roll back if a regeneration goes sideways.
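
As an example of what to look for when you inspect places where user input is handled, here is a minimal sketch of escaping visitor-supplied text before it lands in a page. The `render_comment` function and the guestbook scenario are hypothetical; the point is only that generated code should do something equivalent wherever it echoes what a visitor typed.

```python
import html

def render_comment(author, message):
    """Build a guestbook entry, escaping anything the visitor typed."""
    safe_author = html.escape(author)
    safe_message = html.escape(message)
    return f'<p class="comment"><strong>{safe_author}</strong>: {safe_message}</p>'

print(render_comment("Ada", "Loved the workshop!"))
print(render_comment("Mallory", "<script>alert('hi')</script>"))
```

If the AI's draft drops raw input straight into the markup, that is a good moment to ask it to escape the value, or to add the escaping yourself.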

No‑code vs vibe coding

No‑code platforms give you templates and drag‑and‑drop building blocks. Vibe coding generates original scaffolds from your description. The difference feels like choosing from a menu versus asking a chef to make something that matches your taste. Both are useful; vibe coding just offers more room to invent.

Where this could go next

- Personal memoir sites: “Scrapbook style, Polaroid frames, large pull quotes, gentle page turns.”

- Art portfolios: “Gallery feel, spotlighted images, keyboard navigation, minimal chrome.”

- Micro‑business pages: “Simple services page, friendly pricing cards, contact form, warm colors, accessible and fast.”

- Playful experiments: “Underwater jazz‑club website”—because creativity grows when you let yourself play.

The bigger picture

Vibe coding doesn’t replace engineering; it widens the on‑ramp. Professionals will still refine, optimize, and secure systems. But more people will finally ship the projects they’ve imagined for years. That’s a cultural shift: from “I wish I could build that” to “I can try this today.”

If the internet’s first phase rewarded precision, the next phase might reward intention. When you can tell a computer how something should feel, you free up energy for ideas—and that’s where the fun begins.

by Patrix | Aug 8, 2025

Open-weight AI models have arrived! OpenAI just released something that feels a bit like a plot twist: their first open-weight model family in over five years. It’s called gpt-oss, and if you’ve been waiting for a powerful, transparent, and commercially usable large language model, this one might just be your new favorite toy.

So what is it, why does it matter, and how can you actually use it?

What is gpt-oss?

At the heart of the release is gpt-oss-120b, a 117-billion parameter model using a Mixture-of-Experts (MoE) architecture. That means instead of having all its neurons fire at once (as dense models do), it only activates 4 out of 128 “experts” per layer for each token. Think of it like having a panel of 128 specialists, and calling on the 4 most relevant ones for each word.
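
The "panel of specialists" idea can be sketched in a few lines. This is a toy illustration of top-k expert routing, not OpenAI's implementation: the experts here are stand-in functions, the router scores are random, and applying a softmax over only the chosen experts is one common formulation that I am assuming for the example.

```python
import math
import random

NUM_EXPERTS = 128   # experts per layer, as described above
TOP_K = 4           # experts activated per token

def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k highest-scoring experts and softmax over just those scores."""
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    peak = max(router_logits[i] for i in chosen)
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]

def moe_layer(token_value, router_logits, experts):
    """Weighted sum of the chosen experts' outputs; the other experts never run."""
    return sum(weight * experts[i](token_value) for i, weight in route_token(router_logits))

# Toy stand-ins: each "expert" just scales its input by a fixed factor.
rng = random.Random(0)
experts = [lambda x, s=rng.uniform(0.5, 1.5): x * s for _ in range(NUM_EXPERTS)]
router_logits = [rng.gauss(0, 1) for _ in range(NUM_EXPERTS)]  # hypothetical router scores

routing = route_token(router_logits)
print("experts used:", [i for i, _ in routing])
print("layer output:", moe_layer(1.0, router_logits, experts))
```

Only 4 of the 128 expert functions are ever called for this token, which is where the compute savings come from.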

That makes gpt-oss-120b both powerful and efficient. It delivers performance on par with OpenAI’s proprietary o4-mini model, yet it can run inference on a single 80 GB GPU. That’s a big deal.

There’s also a smaller sibling, gpt-oss-20b, with around 21 billion parameters and designed to run on desktop GPUs with just 16 GB of VRAM. This one’s a great fit for local deployments and smaller custom AI tools.
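
A rough back-of-the-envelope calculation shows why those GPU sizes are plausible. The bit widths below are my assumptions for illustration (this post does not state the storage precision), and the estimate covers the weights only, ignoring activations, the KV cache, and any layers kept at higher precision.

```python
def weight_memory_gb(num_parameters, bits_per_parameter):
    """Approximate memory for the weights alone, in gigabytes (10**9 bytes)."""
    return num_parameters * bits_per_parameter / 8 / 1e9

# Parameter counts as quoted in this post.
models = {"gpt-oss-120b": 117e9, "gpt-oss-20b": 21e9}

for name, params in models.items():
    at_16_bit = weight_memory_gb(params, 16)
    at_4_bit = weight_memory_gb(params, 4)   # assumed precision, not stated in the post
    print(f"{name}: ~{at_16_bit:.0f} GB at 16-bit, ~{at_4_bit:.1f} GB at 4-bit")
```

At 16 bits per weight the larger model would need several GPUs; at roughly 4 bits it fits under 80 GB with room to spare, and the smaller one fits under 16 GB.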

Why This Release Matters

Here’s the kicker: OpenAI hasn’t released an open-weight language model since GPT-2 in 2019. That means for half a decade, they’ve kept their most powerful models tightly guarded, which is understandable but frustrating for developers and researchers who wanted to tinker, fine-tune, and self-host.

gpt-oss changes that.

It’s released under the Apache 2.0 license, which is remarkably permissive. You can use it commercially, modify it, retrain it, and even ship it as part of a product—without OpenAI looking over your shoulder.

This opens the door for startups, indie developers, educators, and researchers to build powerful, privacy-respecting tools with serious reasoning capabilities.

Transparent Reasoning, Tested Safety

One of the more impressive features: visible chain-of-thought (CoT) reasoning. That means you can ask the model to “show its work” as it solves problems, thinks through logic, or reasons its way to an answer. It’s a huge plus for applications in education, healthcare, law, and anywhere else where AI transparency is non-negotiable.

OpenAI also ran the model through their Preparedness Framework, testing for biosecurity and cybersecurity misuse risks—even under adversarial fine-tuning. The model did not show dangerous capabilities under those conditions. So it’s not just powerful—it’s responsibly released.

Performance in the Wild

OpenAI says gpt-oss-120b holds its own on serious benchmarks:

- Competitive programming (Codeforces)

- Math challenges (AIME)

- Medical reasoning (HealthBench)

- Tool-using workflows like Python scripting and web searches

And despite its 117 billion parameters, it can do all this on a single 80 GB GPU. You could run it on-prem, or spin it up in the cloud using platforms like Azure, AWS, Hugging Face, or Databricks. Several of these partners are already deploying the model natively.

Why Developers Should Care

This isn’t just a research toy—it’s a production-ready LLM. A few reasons you might want to play with it:

- Efficiency at scale: The MoE structure means you get 117B-parameter performance without 117B-parameter costs.

- Customizable reasoning depth: You can select low, medium, or high-effort chain-of-thought modes to balance accuracy vs latency.

- Truly open weights: Download it. Fine-tune it. Ship it.

- Cloud and local options: From a workstation to a cluster, you’ve got deployment flexibility.

The Specs (in Plain English)

| Feature | gpt-oss-120b |
|---|---|
| Total Parameters | ~117 Billion |
| Transformer Layers | 36 |
| Experts per Layer | 128 |
| Active Experts per Token | 4 |
| Architecture | Sparse Mixture-of-Experts (MoE) |
| GPU Requirement | Single 80 GB GPU |
| License | Apache 2.0 (open for commercial use) |
| Chain-of-Thought Output | Transparent and configurable |
| Safety Testing | Passed adversarial misuse evaluations |

A New Chapter for OpenAI?

OpenAI releasing gpt-oss under open weights might be one of the more quietly transformative moves in the AI ecosystem this year. It brings them into closer philosophical alignment with the open-source movement—while still maintaining some safeguards around training data privacy.

Whether you’re building a personal coding assistant, a healthcare chatbot, or an internal enterprise tool, gpt-oss gives you a transparent, fast, and smart foundation to build on.

And this time, it’s yours to keep.

Want to explore what gpt-oss can do? Stay tuned—we’ll be publishing fine-tuning guides, use case experiments, and performance comparisons right here on ArtsyGeeky.

by Patrix | Aug 4, 2025

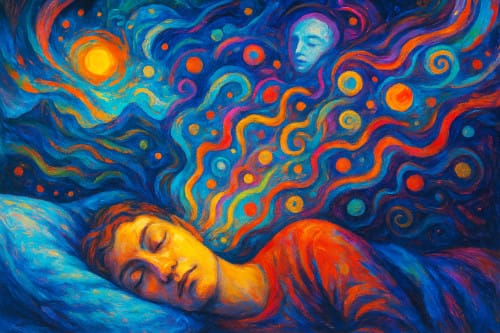

Ever had that dreamy moment when you’re just starting to doze off, and suddenly your mind floods with strange images, sounds, or ideas that seem to come from nowhere? Maybe it felt like falling, or maybe you heard your name called out from the void—only to realize you’re still half-awake. Welcome to the weird and wonderful world of hypnagogia, the twilight state between wakefulness and sleep.

It’s a mental borderland where creativity blossoms, logic loosens, and the subconscious starts stretching its legs. Artists, inventors, and philosophers have long dipped into this semi-dream state for inspiration. And now, in a world driven by sleep science, cognitive hacking, and AI, hypnagogia is making a comeback.

What Is Hypnagogia?

Hypnagogia (pronounced hip-nuh-GO-jee-uh) refers to the transitional state your brain enters as you fall asleep. It’s the soft descent from conscious awareness to unconscious dreaming. Unlike REM sleep (when dreams get cinematic), hypnagogia tends to be more fragmentary, fleeting, and surreal—like the mind whispering to itself just before the lights go out.

Neuroscientifically, it’s marked by changes in brainwave activity: your alert beta waves start to give way to slower alpha and theta waves. You’re no longer fully awake, but not quite asleep either.

This state is often rich in:

- Visual hallucinations: Shapes, colors, faces, landscapes

- Auditory distortions: Echoes, single words, music, or whispers

- Physical sensations: The infamous “falling” feeling or sleep starts (called hypnic jerks)

- Creative thoughts: Sudden insights or strange mental associations

In other words, hypnagogia is a temporary suspension of the rules—your usual mental filters go offline just long enough for your inner world to get weird.

History’s Most Famous Half-Asleep Thinkers

Many brilliant minds throughout history have tapped into the hypnagogic state as a wellspring of creative insight.

- Salvador Dalí used what he called “slumber with a key”: he’d nap in a chair while holding a metal key over a plate. As he drifted off and dropped the key, the clatter would wake him—allowing him to grab whatever surreal images floated through his mind.

- Thomas Edison reportedly used a similar technique with ball bearings, a chair, and a tin plate. He is said to have regarded this liminal state as a gateway to his best ideas.

- Mary Shelley, author of Frankenstein, described seeing her famous monster in a waking dream—not quite asleep, not quite awake.

- August Kekulé, a 19th-century chemist, later recounted a half-asleep vision of a snake biting its own tail, which he said led him to the ring structure of the benzene molecule.

What Happens in the Brain During Hypnagogia?

Some brain imaging studies suggest that during hypnagogia, activity in the default mode network (DMN) ramps up. This network of brain regions is associated with daydreaming, self-reflection, and internal narrative. At the same time, areas responsible for sensory processing remain semi-active, which may explain why hypnagogic visions and sounds feel so vivid.

It’s also a time when executive function—the brain’s taskmaster—lets down its guard. That’s why your thoughts might jump from an old memory to a strange image to a new idea, all in a matter of seconds. It’s nonlinear, associative thinking at its finest.

Can You Use Hypnagogia for Creativity?

Yes—and people are doing it.

Some creatives actively try to induce hypnagogia through intentional napping, meditation, or lucid dreaming techniques. Here are a few practical ways to experiment:

1. Hypnagogic Journaling

Lie down with a journal nearby. As you begin to doze, try to stay aware of the images or phrases that come to mind. The moment you jerk awake or stir, jot down anything you remember—no matter how odd.

2. The Dalí Method (with a modern twist)

Try holding a small object—like a spoon or coin—in your hand while resting in a chair. Place a metal or ceramic plate underneath. As you drift off and drop the object, the sound will wake you. Capture whatever you saw or thought about.

3. Audio Triggers

Some people use soft ambient music, binaural beats, or even AI-generated soundscapes to help ease into the hypnagogic zone. Apps like Endel and Brain.fm, or sleep music loops on YouTube, can help coax the brain into theta territory.

Why Hypnagogia Matters in an Age of Hyper-Productivity

In a culture obsessed with productivity hacks, attention spans, and the “optimization” of every waking moment, hypnagogia is a gentle rebellion. It’s a reminder that creativity doesn’t always emerge from grinding harder—it can come from surrender, softness, and liminal mental space.

AI is increasingly being trained to simulate aspects of human creativity, but it’s the irrational, fluid, dreamlike logic of states like hypnagogia that remains uniquely human, at least for now. In fact, some researchers are studying hypnagogic imagery as a model for how future AI might mimic human associative thinking.

At the same time, scientists are looking into the potential therapeutic benefits of this state. Some early work suggests it might help in processing trauma, enhancing memory, or even supporting problem-solving during sleep onset.

Next time you’re nodding off and a bizarre image flashes across your mind, don’t shrug it off. That may just be your subconscious offering up a sliver of wisdom wrapped in weirdness.

Hypnagogia is a liminal zone, a soft corridor between two worlds. You don’t have to sleep through it. You can explore it, learn from it, and maybe even create something wonderful while dancing on the edge of dreaming.